KMXD · Independent Project

Meeting Prep Agent: Structuring AI Outputs for Decision-Ready Briefings

A no-code AI workflow experiment built in Zapier exploring how meeting intelligence is generated, structured, and trusted in systems with limited user context. The project examines a core tension in AI workflow tools: useful specificity versus truthful grounding in decision-making outputs.

Try the live AI workflow →

Enter a name, company, and meeting context to receive a structured briefing in your inbox.

No 1 · Overview

What this is

Meeting Prep Agent is a no-code AI workflow built in Zapier that generates structured, decision-ready meeting briefings from a simple input: a person's name, their company, and a short meeting context.

Rather than optimizing for summarization, it explores how AI-generated outputs behave when pushed toward decision usefulness under limited and unreliable context.

This project is as much about Zapier's UX gaps as it is about AI outputs.

Why I built this

I noticed a recurring pattern in tools like Zapier and emerging AI workflow builders: they make automation easy, but not intelligence reliable.

Even when outputs were well-formatted, they often failed to support real decision-making when context was incomplete or ambiguous.

This led me to build and test an AI workflow inside Zapier to understand what breaks when we force decision-quality outputs from systems designed only for task automation.

Who it is for

Product and design leads who rely on meeting context to make decisions, but operate in environments where inputs are incomplete and fragmented and preparation time is short.

Core problem explored

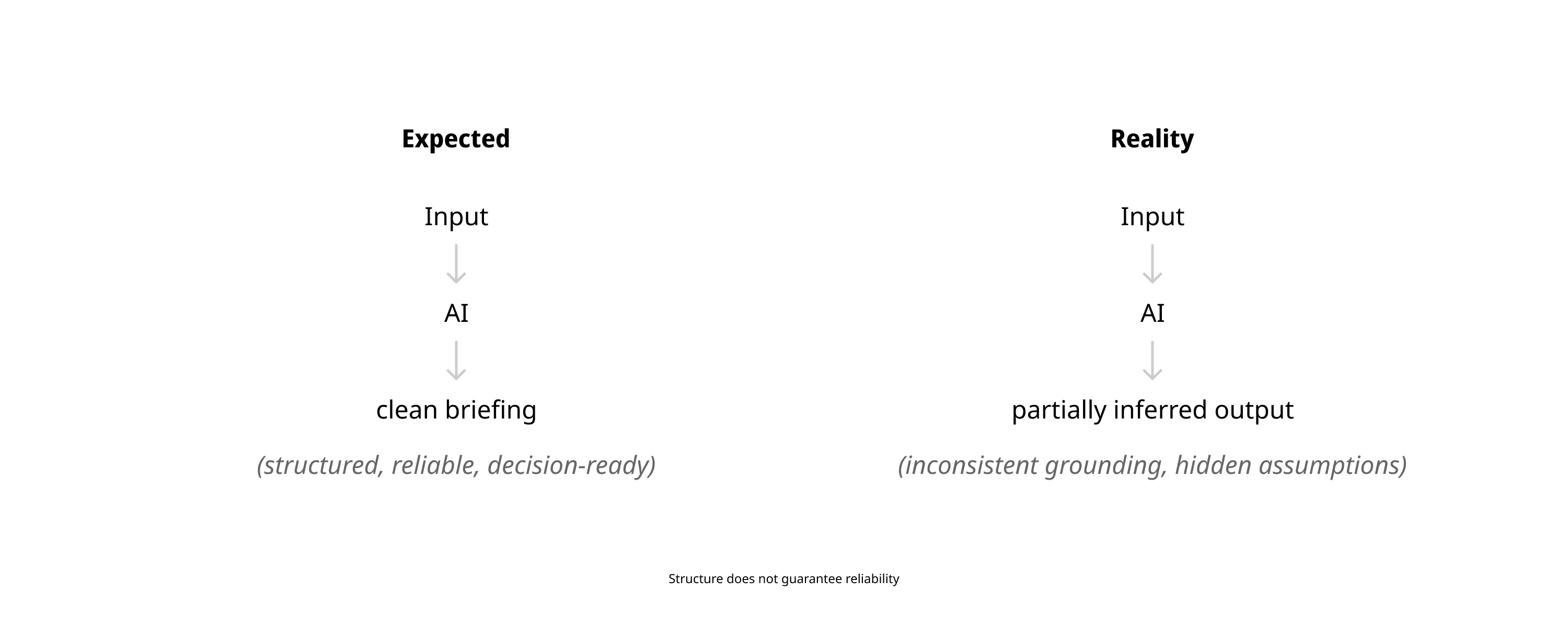

When context is missing, AI systems tend to infer details, over-specify outputs, and produce confident but weakly grounded information.

This creates a gap between useful outputs and trustworthy outputs.

No 2 · Problem

Modern AI workflow tools like Zapier treat AI as a deterministic step in a linear pipeline. In practice, the AI step introduces reasoning, uncertainty, and variability that the pipeline is not designed to handle.

Core breakdown: AI breaks the workflow mental model

Zapier assumes predictable inputs and outputs. AI breaks this assumption: the same input can produce different outputs, and correctness is not structurally guaranteed.

Prompt becomes the system design layer

The workflow collapses into a single prompt field with no native support for reasoning structure or validation. Users are forced to manually simulate system design inside natural language instructions.

No visibility into reasoning or failure

When outputs fail, the system provides no insight into what was inferred or missing. Debugging becomes guesswork rather than system feedback.

Key takeaway

Zapier extends automation into AI, but does not extend UX to support uncertainty, reasoning, or trust.

No 3 · System Failures

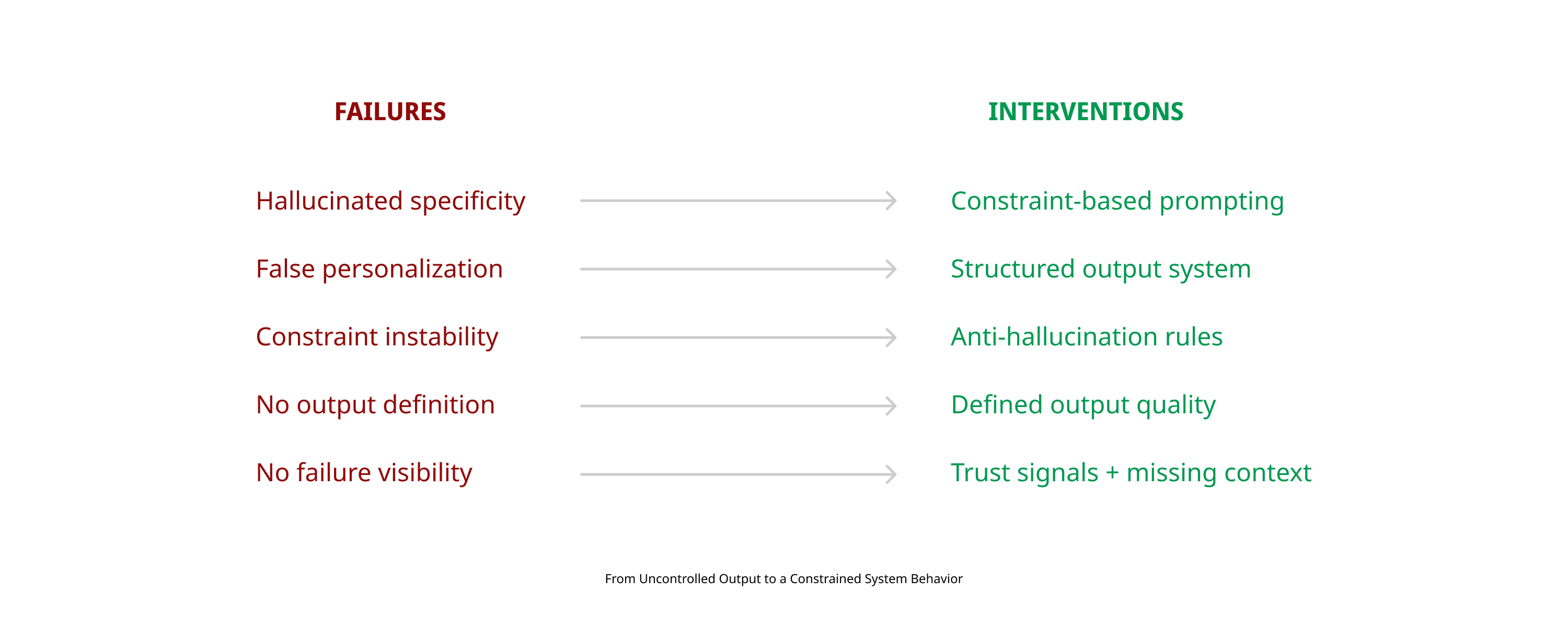

These failures were not random. They emerged consistently across inputs and iterations, pointing to structural behaviors rather than fixable bugs.

Hallucinated specificity

When context was missing, the system filled gaps with confident but ungrounded details.

→ Useful tone, unreliable truth

False personalization

Structured outputs created an illusion of user-specific insight without real grounding.

→ Feels tailored, but is generically inferred

Output instability

Small prompt changes produced large shifts in output structure and quality, with no stable reference for what a correct output looked like.

→ Outputs oscillated between generic and overly specific across runs

No failure visibility in workflow

Zapier marked workflows as successful even when outputs were weak or unreliable.

→ Failure is invisible at system level

Key insight

These are not isolated bugs. They are structural behaviors of combining probabilistic AI with deterministic workflow systems.

No 4 · Design Interventions

Shift from Output Generation to Output Governance

The system is not designed to "sound smart"

It is designed to stay correct under uncertainty

Prompt as System Layer

The prompt is treated as a governed system layer, not a one-off input

→ Explicit rules define what the system can and cannot say

→ Encodes behavior, constraints, and structure across runs

→ Reduces instability and prevents fabricated specificity

Anti-Hallucination Logic

The system is instructed to default to "unknown" over guessing

→ No inferred roles unless explicitly stated

→ No invented company or personal details
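
In the project this rule lives in the prompt instructions. As a minimal sketch of the same idea in code, the snippet below carries forward only what the user explicitly submitted and falls back to "unknown" otherwise. The field names (`name`, `company`, `role`) and the helpers `resolve_field` and `build_profile` are hypothetical, chosen for illustration; they are not part of the Zapier workflow.

```python
from typing import Dict, Optional

UNKNOWN = "unknown"


def resolve_field(stated_value: Optional[str]) -> str:
    """Return the explicitly stated value, or "unknown" when nothing was stated."""
    if stated_value is None:
        return UNKNOWN
    cleaned = stated_value.strip()
    return cleaned if cleaned else UNKNOWN


def build_profile(form: Dict[str, str]) -> Dict[str, str]:
    """Build a profile from submitted fields only; nothing is inferred or guessed."""
    # Hypothetical field names; a real form may collect different inputs.
    return {key: resolve_field(form.get(key)) for key in ("name", "company", "role")}
```

A blank or absent role stays "unknown" rather than being guessed from the company or the meeting context.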

Trust Signal Layer

Every key statement is labeled with confidence

→ 🟢 Known fact

→ 🟡 Inference

→ 🔴 Missing or uncertain

→ Makes reasoning visible to the user
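
One way to picture the trust signal layer is as a small data structure: each statement carries exactly one of the three labels, and the label is rendered in front of the text. This is an illustrative sketch only; the names `Trust`, `Statement`, and `render` are assumptions, and in the actual workflow the labels are produced by the prompt rather than by code.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Trust(Enum):
    KNOWN = "🟢"      # Known fact
    INFERENCE = "🟡"  # Inference
    MISSING = "🔴"    # Missing or uncertain


@dataclass
class Statement:
    text: str
    trust: Trust


def render(statements: List[Statement]) -> str:
    """Prefix every statement with its trust label, one statement per line."""
    return "\n".join(f"{s.trust.value} {s.text}" for s in statements)
```

Because the label is a required field, a statement cannot reach the output without declaring how well grounded it is.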

Explicit Missing Context

Gaps are surfaced, not hidden

→ "Role unknown" becomes part of the output

→ Enables better human judgment instead of blind trust

Structured Output with Defined Quality

Output is broken into predictable, scannable blocks

→ Quick Prep, Actions, Leverage, Context, Trust

→ Good output is not subjective: skimmable in 60 seconds, facts separated from assumptions, every section drives toward action
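
The fixed block structure also makes output checkable. As a hedged sketch, assuming a briefing has been parsed into a dictionary keyed by section name (the parsing step and the helper `missing_sections` are hypothetical), a completeness check could look like this:

```python
from typing import Dict, List

# Section names taken from the briefing structure described above.
REQUIRED_SECTIONS = ("Quick Prep", "Actions", "Leverage", "Context", "Trust")


def missing_sections(briefing: Dict[str, str]) -> List[str]:
    """Return required sections that are absent or blank, in the defined order."""
    return [
        name
        for name in REQUIRED_SECTIONS
        if not str(briefing.get(name, "")).strip()
    ]
```

An empty result means every block is present; it says nothing about whether the content inside each block is accurate.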

Making Failure Legible

Failures are designed to be visible in the output

→ Missing data, low confidence, weak signals

→ Turns black-box behavior into inspectable output
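
To illustrate what "inspectable" can mean in practice, the sketch below counts the 🟢, 🟡, and 🔴 labels in a briefing and reports them in one line. It assumes each labeled statement starts its own line with the emoji; the function `failure_summary` is hypothetical and not part of the built workflow.

```python
def failure_summary(briefing_text: str) -> str:
    """Summarize how many labeled statements are known, inferred, or missing."""
    lines = [line.strip() for line in briefing_text.splitlines()]
    known = sum(line.startswith("🟢") for line in lines)
    inferred = sum(line.startswith("🟡") for line in lines)
    missing = sum(line.startswith("🔴") for line in lines)
    total = known + inferred + missing
    if total == 0:
        # No labels at all is itself a failure signal worth surfacing.
        return "No labeled statements found: treat this briefing as unverified."
    return (
        f"{known} known, {inferred} inferred, {missing} missing "
        f"out of {total} labeled statements."
    )
```

A briefing dominated by 🟡 and 🔴 lines is still a successful run from the workflow's point of view, but the summary makes its weakness visible to the reader.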

These same interventions informed real product work.

At ChatFin, where I work as Principal Product Designer, I applied the same principles to the platform's AI agent assist: adding trust signal layers, surfacing missing context explicitly, and structuring outputs for faster financial decision-making.

The challenge in both cases was identical: how do you make AI output useful without making it dishonestly confident?

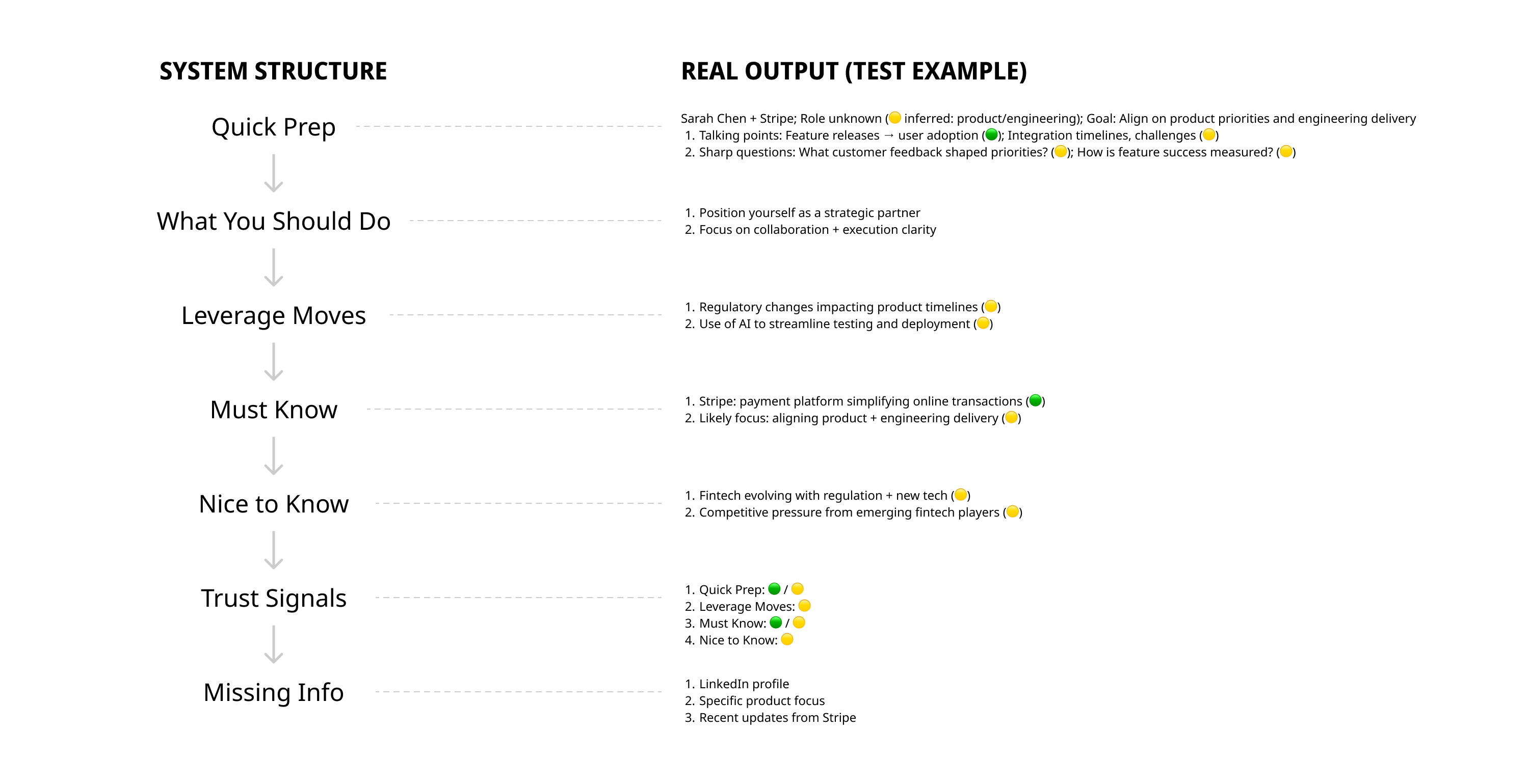

No 5 · Final System

From Prompt to System Behavior

What started as a single prompt evolved into a governed output system

→ Behavior is now predictable, inspectable, and constrained

End-to-End Workflow

Trigger → AI Processing → Structured Email Output

→ Simple pipeline, tightly controlled at the generation layer
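
The three stages can be read as a plain function composition. The sketch below is not how Zapier runs the workflow; it is a stand-in that assumes a form submission arrives as a dictionary, and it takes the AI step and the email step as injected callables (`generate`, `send_email`) so no real service API is implied. All names and field keys are hypothetical.

```python
from typing import Callable, Dict


def build_prompt(submission: Dict[str, str]) -> str:
    """Turn a form submission into the text handed to the AI step."""
    fields = ("name", "company", "context")  # hypothetical form fields
    lines = [
        f"{field}: {submission.get(field, '').strip() or 'unknown'}"
        for field in fields
    ]
    return "Prepare a meeting briefing.\n" + "\n".join(lines)


def run_workflow(
    submission: Dict[str, str],
    generate: Callable[[str], str],
    send_email: Callable[[str, str], None],
) -> str:
    """Trigger -> AI processing -> structured email output."""
    prompt = build_prompt(submission)          # Trigger: form submission arrives
    briefing = generate(prompt)                # AI processing: all control lives here
    send_email(submission["email"], briefing)  # Output: briefing goes to the inbox
    return briefing
```

The pipeline itself has no branching or validation, which is why the constraints have to sit in the generation step.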

Designed for 30 to 60 Second Consumption

Output prioritizes speed over depth

→ Key signals surface instantly

→ No dense paragraphs, no cognitive overload

Structured for Action, Not Just Insight

Every section drives toward a decision

→ "What You Should Do" is the core, not an afterthought

Transparent and Stable by Default

The system exposes its reasoning through trust signals and explicit gaps

→ Handles ambiguity without breaking

→ Degrades gracefully when data is missing instead of fabricating details

Live Prototype

The final system is fully functional and publicly accessible

→ Built in Zapier using Tally, AI by Zapier, and Email by Zapier

→ Handles variable input quality including missing LinkedIn and vague context

→ Delivers structured briefings to any email address in under 2 minutes

System Structure to Real Output Mapping

Designed for 30 to 60 second decision-making

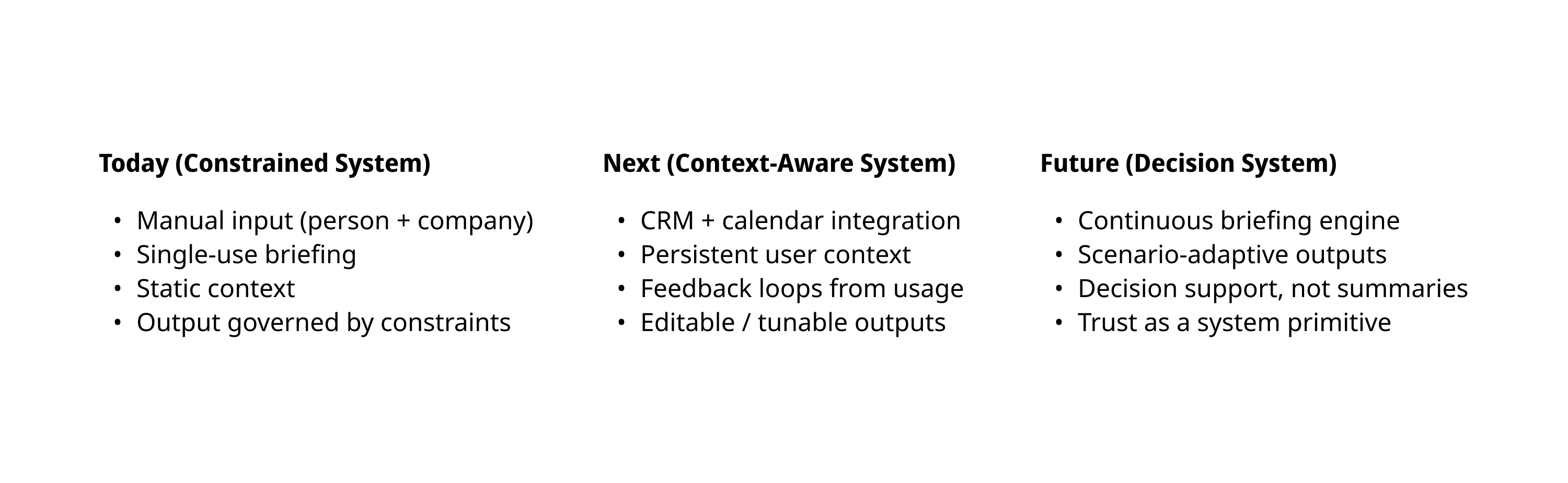

No 6 · Future Vision

From Event-Based to Continuous Intelligence

The current system is triggered by a single form submission

→ Future systems pull from CRM, calendar, and interaction history

→ Context becomes a continuously updated layer, not a one-time input

From Static Output to Adaptive Briefings

Output should adjust based on meeting type and user intent

→ Sales, hiring, investor, internal sync

→ Same system, different framing

Personalization Without Fabrication

Move from guessed personalization to real signals

→ Past interactions, known preferences, actual data

→ Trust increases as the system earns context

User Actions as System Signals

What users edit, ignore, or act on becomes training data

→ Inline editing lets users shape output before it is finalized

→ System improves relevance over time through real usage patterns

From Output to Decision Support

The goal is not better summaries

→ It is better decisions in less time

→ Briefings evolve into actionable guidance systems

Trust as a First-Class System Layer

Confidence, sources, and uncertainty become core primitives

→ Not a feature added later

→ Foundation for adoption in enterprise environments

Beyond Zapier

The principles built here extend beyond the tool

→ Output governance, trust signaling, and anti-hallucination logic apply directly to CRM workflows, sales intelligence, and internal AI systems

This project is live. Try it, break it, and see what the system does with what you give it.

Try the live AI workflow →