Stackwise: Tool Evaluation as a Shared Decision Experience

A conceptual-to-MVP product exploration reframing how product and design leaders evaluate, choose, and justify software and AI tools, with clarity, alignment, and institutional memory.

Try the live Stackwise tool

No 1 Overview

What Stackwise is

Stackwise is an exploration of how product and design teams evaluate and choose software tools before purchase, especially in fast-moving AI categories where decisions are pressured, cross-functional, and difficult to revisit.

Who it's for

The primary audience is product and design leads who own tool decisions but lack a repeatable way to evaluate options, align stakeholders, and preserve the reasoning behind a final call.

The core problem

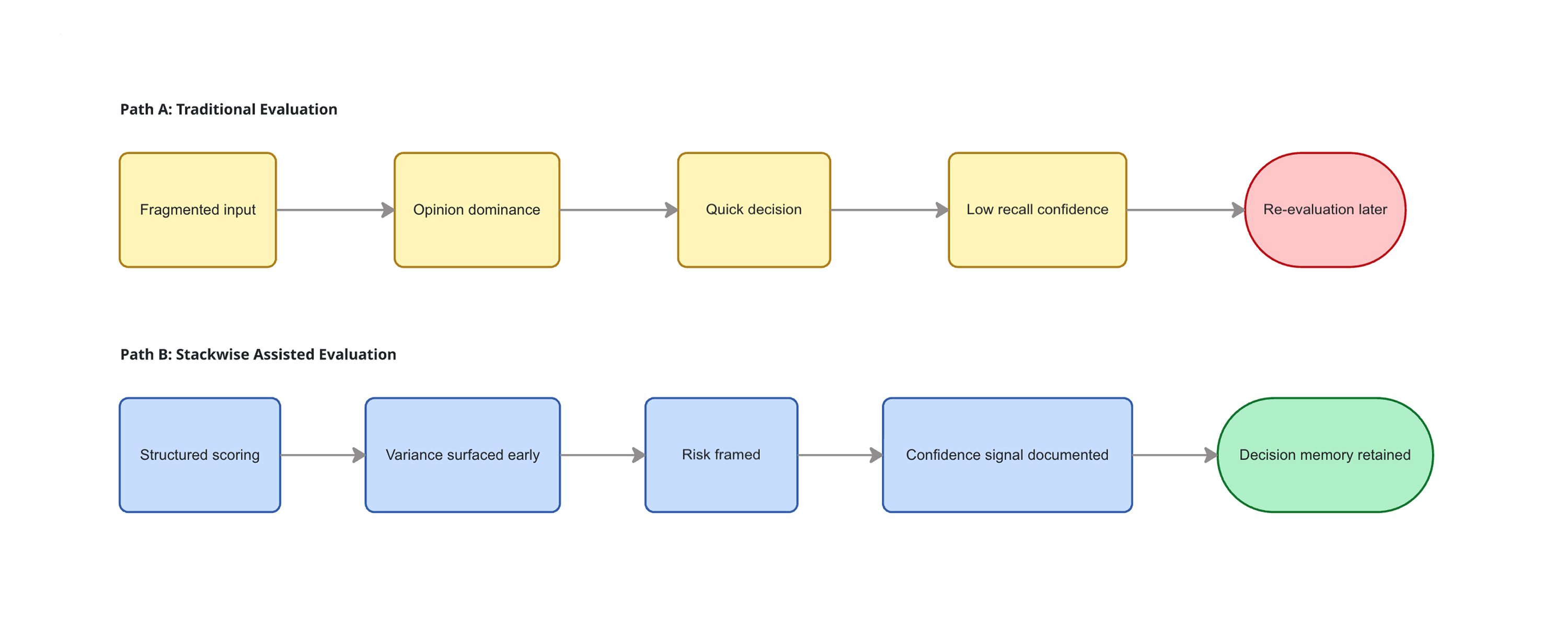

Tool decisions are often made through scattered spreadsheets, Slack threads, and demos, with no shared framework and no institutional memory. As a result, teams re-litigate the same choices every six months, under increasing time and budget pressure.

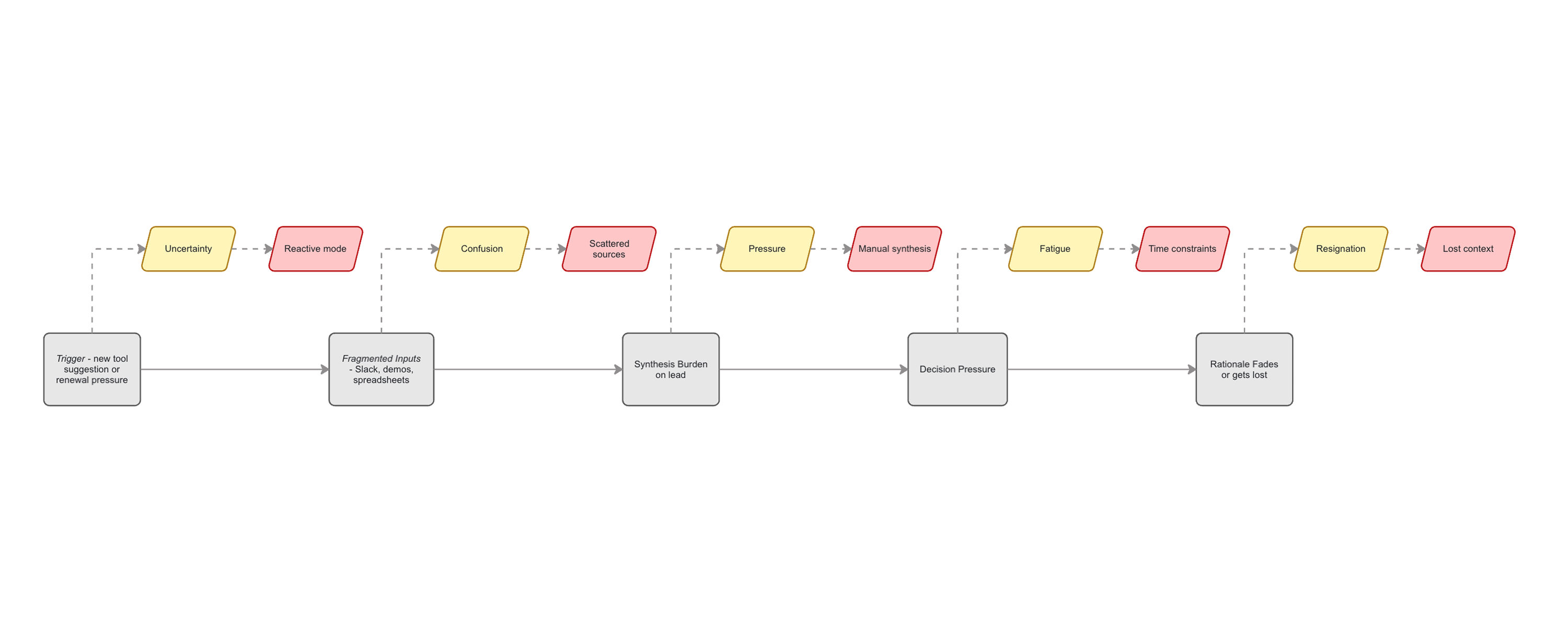

No 2 Problem

Modern product teams are expected to move quickly, adopt new tools confidently, and justify spend, often simultaneously.

But the experience of evaluating tools has not evolved with the pace of software innovation. Decisions are still made through fragmented conversations, demos, and documents that were never designed to support collective judgment.

What should be a deliberate decision experience instead becomes a reactive moment, optimized for speed, not clarity.

Key pain points

• No shared evaluation frame:

Each stakeholder evaluates tools through their own lens, making alignment slow and fragile.

• Decisions lack memory:

Once a tool is chosen, the reasoning behind it quickly disappears, forcing teams to re-evaluate from scratch months later.

• High cognitive load:

Product and design leads act as de facto synthesizers, absorbing demos, opinions, and constraints with little support.

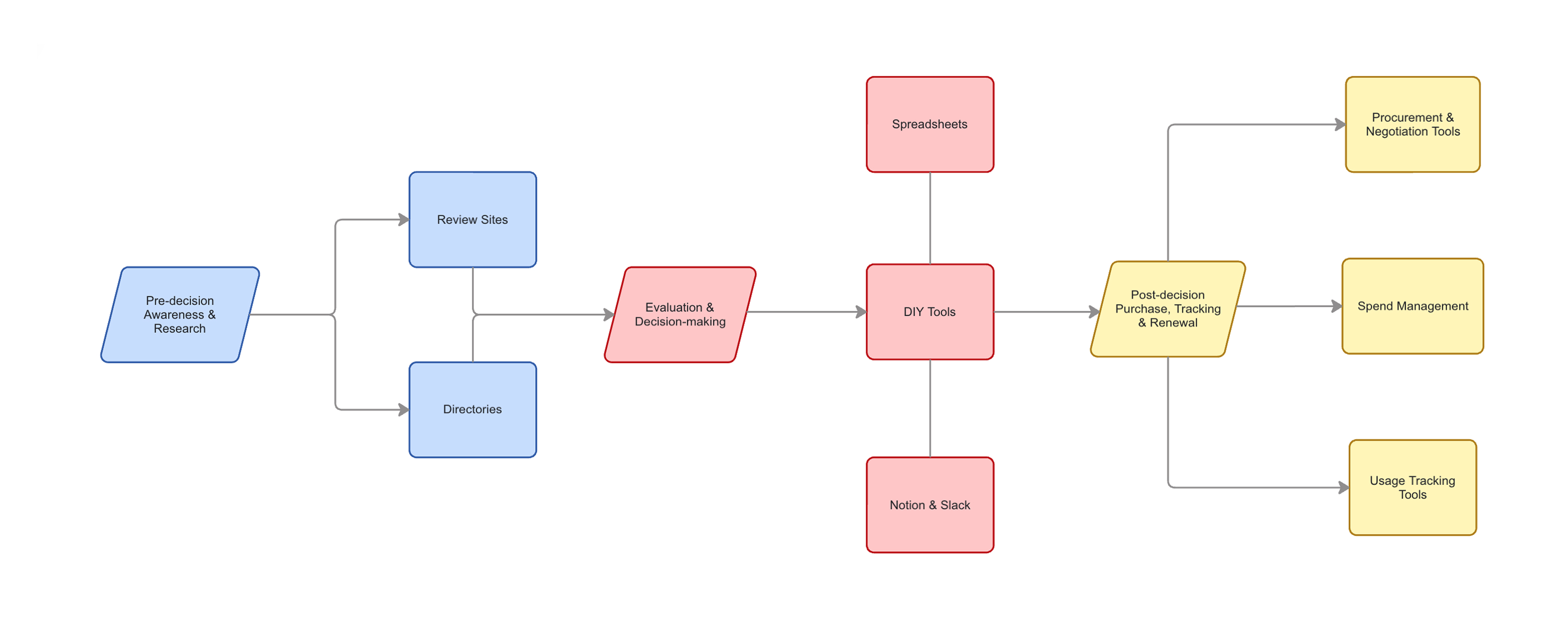

No 3 Competitive Landscape

The software ecosystem already includes many tools that touch parts of the tool-selection lifecycle, but none are designed around the lived experience of making the decision itself.

Most existing solutions optimize for what happens before or after a choice is made: discovering tools, negotiating contracts, tracking spend, or monitoring usage. The evaluation moment, where teams align, compare, and commit, is treated as an ad-hoc gap teams must bridge themselves.

What exists today

SaaS spend management tools focus on license tracking, usage analytics, and cost visibility after a tool is already adopted.

Procurement platforms help with pricing benchmarks and negotiations, but assume the decision has already been made.

Review sites and AI tool directories surface peer opinions and feature lists, but can't account for team-specific workflows, constraints, or trade-offs.

In practice, teams fill the gap with spreadsheets, Notion docs, and Slack threads, assembling a temporary decision process that disappears once the choice is made.

The gap

What's missing is infrastructure for the decision experience itself: a structured way to define criteria, collect cross-functional input, compare options meaningfully, and preserve the reasoning behind a choice.

This gap becomes especially costly with AI tools, where capabilities, pricing models, and quality shift rapidly, turning one-off evaluations into fragile bets that must be revisited again and again.

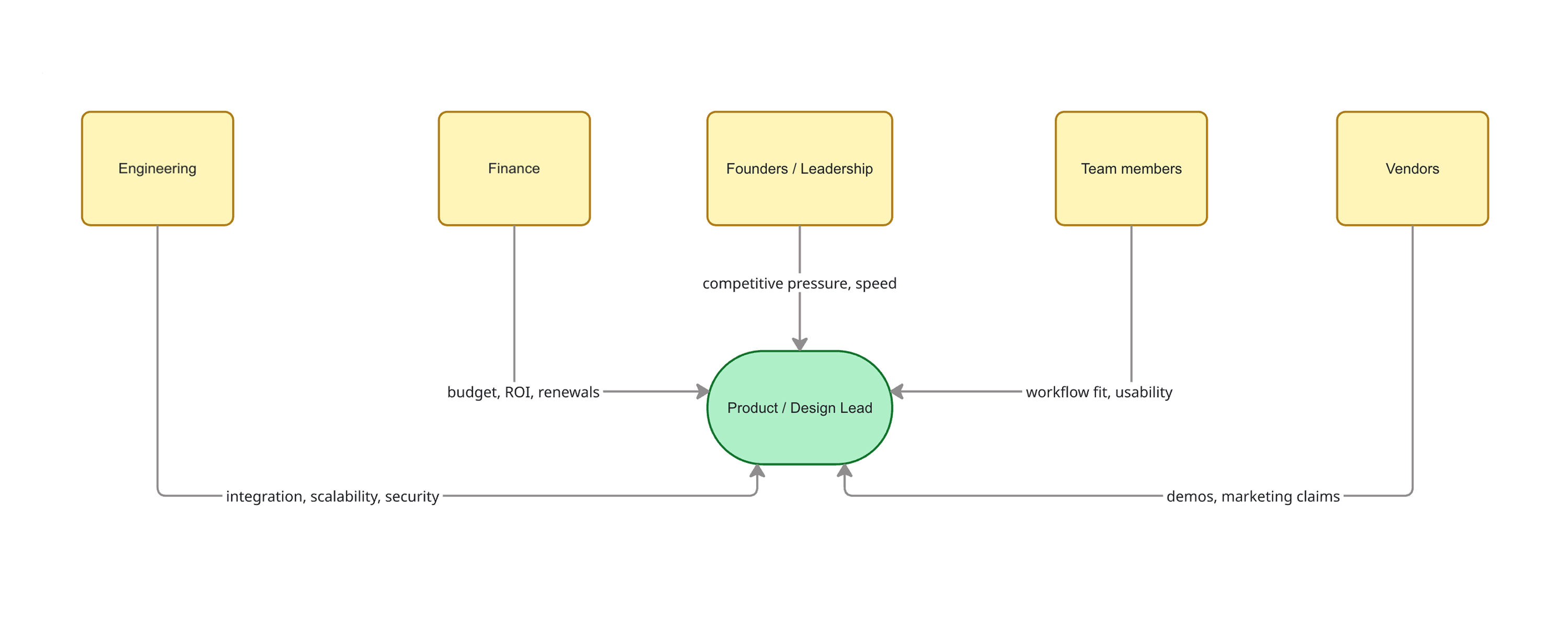

No 4 Users

The pressure of tool decisions does not fall evenly across an organization. It concentrates on the people responsible for delivery and outcomes.

Primary: Product & Design Leads

Product and design leaders sit at the intersection of urgency, expectation, and constraint. They are expected to evaluate new tools quickly, maintain team velocity, and defend decisions to leadership, often without a shared framework to guide the process.

They absorb stakeholder opinions, vendor demos, cost questions, and integration risks, acting as synthesizers under time pressure. When a tool succeeds, it blends into the workflow. When it fails, accountability points back to them.

Secondary: Founders & Finance Leaders

Founders and finance leaders influence decisions through budget authority and strategic priorities. They care about ROI, predictability, and competitive positioning.

However, they typically operate downstream of evaluation. They validate and approve, but do not run trials, collect team input, or reconcile trade-offs firsthand.

Stackwise centers the experience of the primary decision owner, while making cross-functional input structured, visible, and durable.

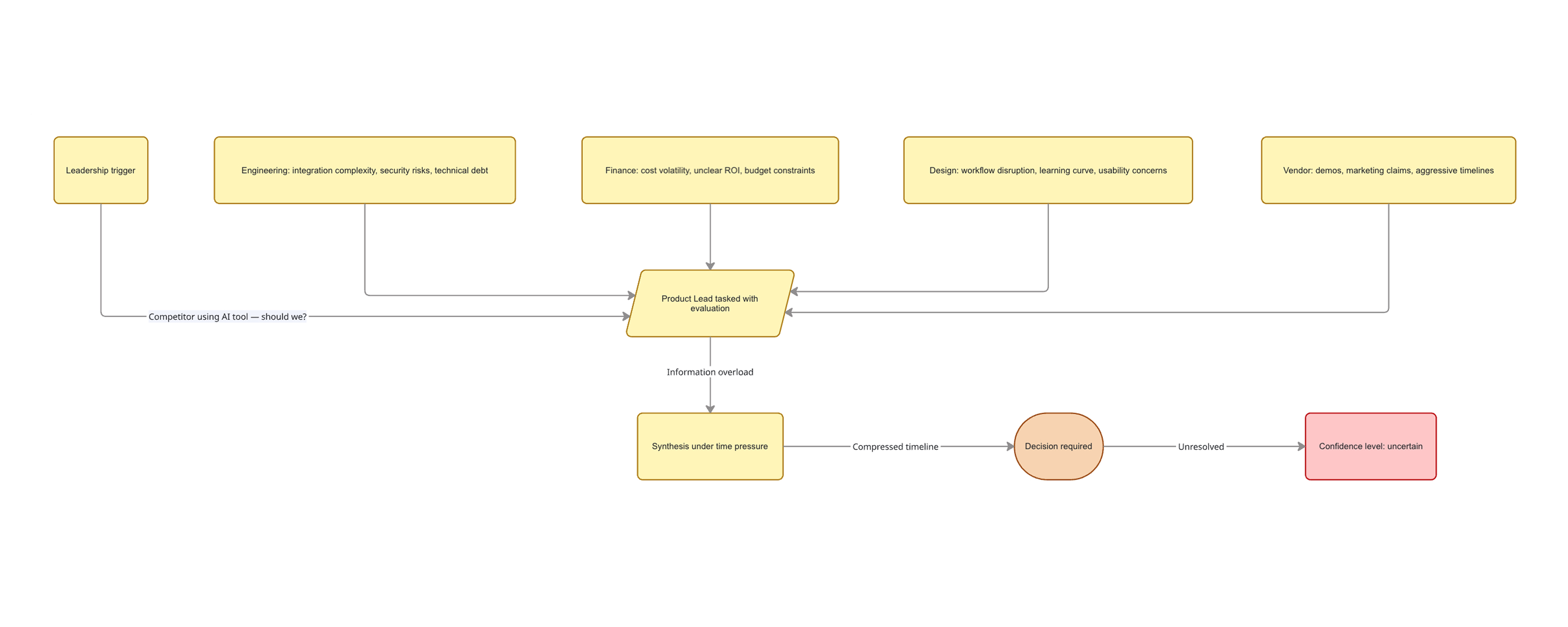

No 5 Use case

To ground the problem, consider a real scenario: leadership hears that competitors are shipping faster using AI-powered coding assistants.

A message lands in Slack: "Should we be using this? What's our plan?"

The product lead is now responsible for evaluating:

• Is this real productivity gain or hype?

• Which tool among many should we test?

• Will short trials reflect real production workflows?

• How do we measure impact credibly?

Engineering cares about integration and security.

Finance asks about token-based pricing volatility.

Designers want usability.

Leadership wants speed.

Without a structured evaluation process, this becomes another spreadsheet, another Slack thread, and another decision made under pressure, with little memory of how it happened.

The desired outcome

A clear, defensible recommendation, with shared criteria, visible trade-offs, and documented rationale that can stand up to renewal, audit, or re-evaluation months later.

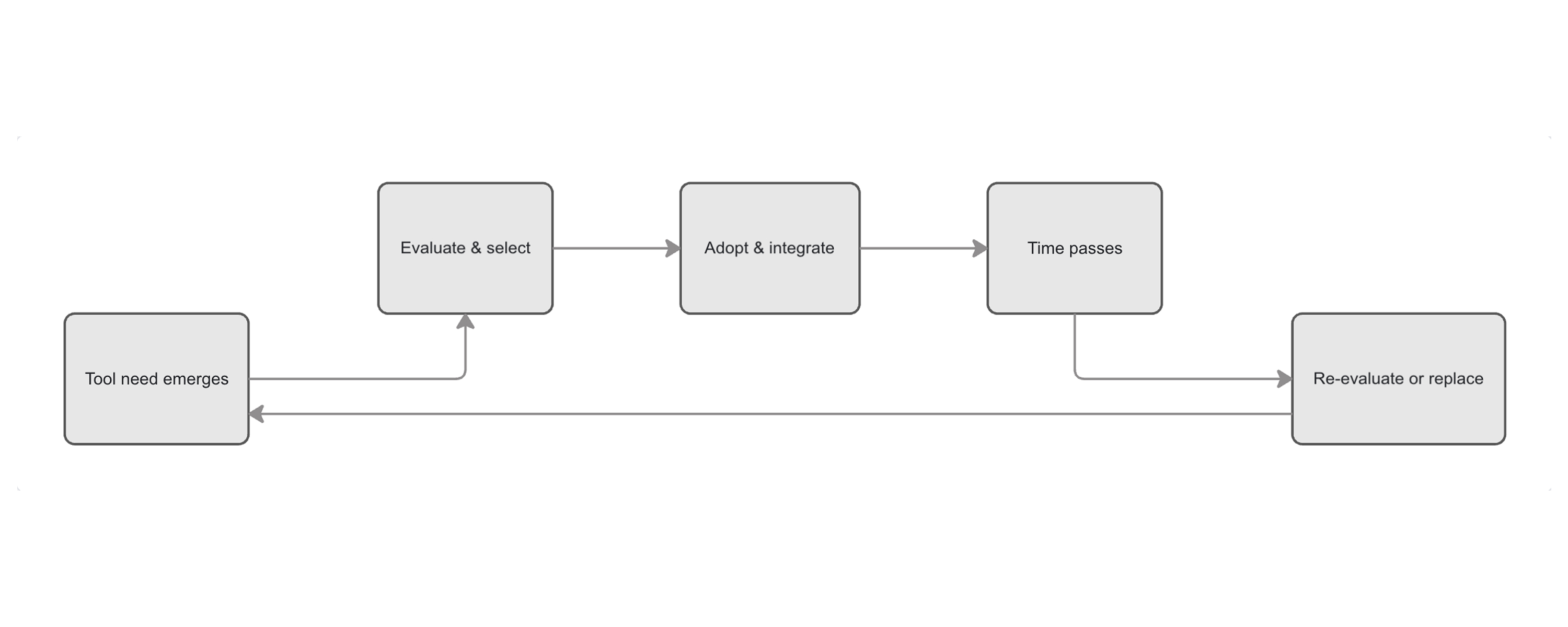

No 6 Flows

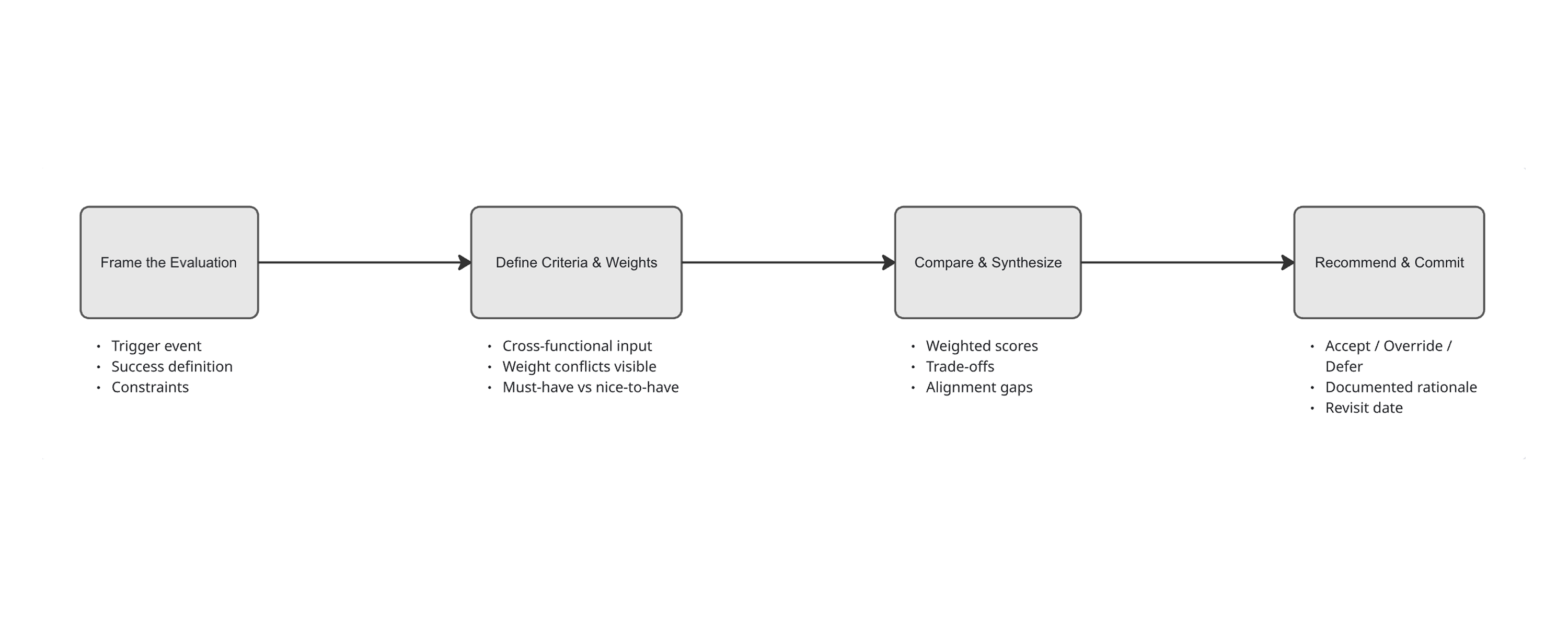

Stackwise treats tool evaluation as a structured workflow, not a one-off document.

The flow below models a single high-stakes evaluation: from framing the decision to committing to a recommendation.

1. Frame the Evaluation

Define why this decision exists, what success looks like, and which constraints matter before any tools are scored.

2. Define Criteria & Weights

Make evaluation dimensions explicit across product, engineering, design, security, and finance, reducing hidden assumptions and post-hoc bias.

3. Compare & Synthesize

Tools are evaluated against shared criteria, surfacing trade-offs, outliers, and alignment gaps.

4. Recommend & Commit

A recommendation is formed with documented rationale. It is then accepted, overridden with justification, or deferred with a revisit date.

The result is not just a selected tool, but a durable decision record.
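As a rough illustration of steps 2 and 3, the sketch below computes a weight-normalized score per tool and ranks the options. The `Criterion` class, the `weighted_score` helper, the 1 to 5 scale, and every number are assumptions made for this example; the case study does not specify the scoring formula Stackwise uses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    """One evaluation dimension (hypothetical structure)."""
    name: str
    weight: float  # relative importance; normalized at scoring time


def weighted_score(criteria, scores):
    """Return the weight-normalized average of per-criterion scores."""
    total_weight = sum(c.weight for c in criteria)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(c.weight * scores[c.name] for c in criteria) / total_weight


# Illustrative criteria and scores on an assumed 1-5 scale.
criteria = [
    Criterion("integration", 3),
    Criterion("security", 3),
    Criterion("usability", 2),
    Criterion("cost", 2),
]
tools = {
    "Tool A": {"integration": 4, "security": 5, "usability": 3, "cost": 2},
    "Tool B": {"integration": 3, "security": 3, "usability": 5, "cost": 4},
}

# Rank tools from highest to lowest weighted score.
ranking = sorted(
    tools,
    key=lambda name: weighted_score(criteria, tools[name]),
    reverse=True,
)
```

With these made-up numbers the two tools land within a tenth of a point of each other, which is the kind of close call where the trade-offs matter more than the ranking itself.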

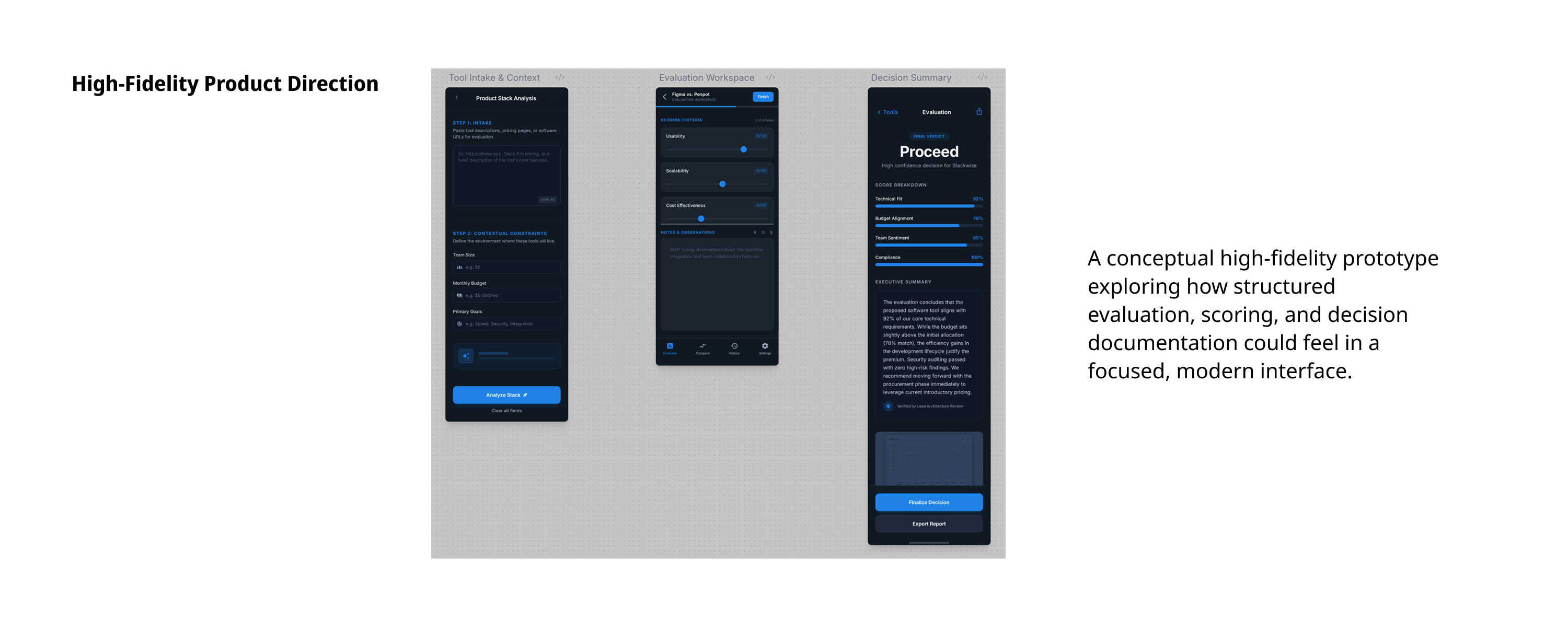

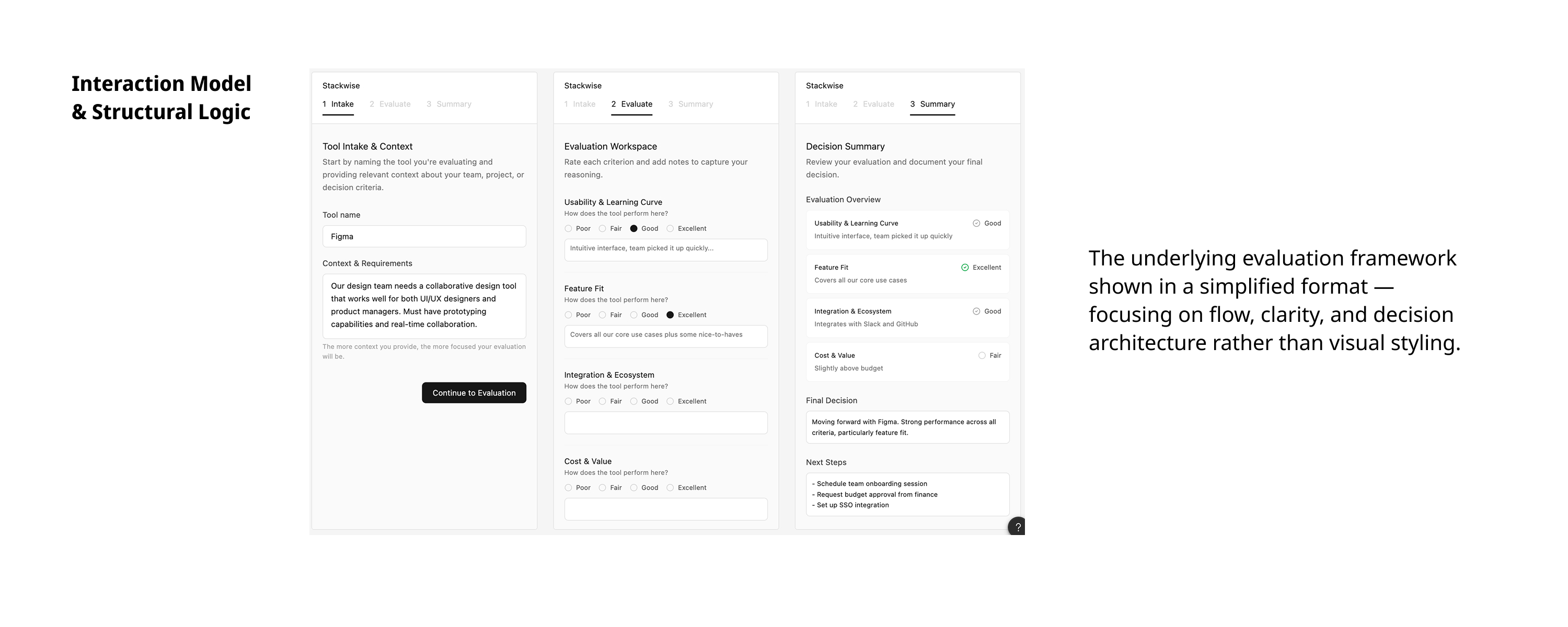

No 7 UI Concepts

The interface is designed around one core idea: the decision moment should feel structured, visible, and defensible.

Rather than acting as a spreadsheet replacement, Stackwise behaves like a guided decision workspace, framing criteria, surfacing trade-offs, and making alignment (or disagreement) explicit.

Main Flow: From Criteria to Commitment

The primary experience moves across three screens:

• Criteria & Weights: define what matters before tools are scored.

• Comparison & Synthesis: see weighted results and alignment gaps.

• Recommendation & Commitment: accept, override, or defer with rationale.

Each step reduces ambiguity while preserving decision memory.

Structural Exploration

Multiple structural approaches were explored, including stacked comparison layouts, role-filtered views, and alternative ranking logic, to test how clearly trade-offs and alignment could be surfaced.

The chosen structure prioritizes: visibility of criteria, transparency of weighting, and clear transition from analysis to commitment.

Interaction principles

• Criteria must be locked before comparison begins to prevent post-hoc bias.

• Outlier stakeholder scores are surfaced automatically to reveal hidden disagreement.

• Overrides require written justification to preserve institutional memory.
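Two of these principles, locking criteria before scoring and requiring a justification for overrides, can be expressed as simple guard checks. The sketch below is a minimal, hypothetical model: the `Evaluation` class, the `commit` function, and the sample values are invented for illustration and are not taken from the Stackwise build. Outlier detection is left out here.

```python
from dataclasses import dataclass, field


@dataclass
class Evaluation:
    """Hypothetical in-memory evaluation; not the Stackwise data model."""
    criteria: dict = field(default_factory=dict)  # criterion name -> weight
    scores: dict = field(default_factory=dict)    # (role, tool, criterion) -> score
    locked: bool = False

    def set_weight(self, name, weight):
        # Weights may only change while criteria are still open.
        if self.locked:
            raise RuntimeError("criteria are locked; weights can no longer change")
        self.criteria[name] = weight

    def lock(self):
        if not self.criteria:
            raise ValueError("define at least one criterion before locking")
        self.locked = True

    def add_score(self, role, tool, criterion, value):
        # Scoring is refused until criteria are locked, to limit post-hoc bias.
        if not self.locked:
            raise RuntimeError("lock criteria before scoring any tool")
        if criterion not in self.criteria:
            raise KeyError(criterion)
        self.scores[(role, tool, criterion)] = value


def commit(recommended, chosen, justification=""):
    """Build a decision record; an override without a written reason is rejected."""
    overridden = chosen != recommended
    if overridden and not justification.strip():
        raise ValueError("an override requires written justification")
    return {
        "recommended": recommended,
        "chosen": chosen,
        "overridden": overridden,
        "justification": justification.strip(),
    }


# Illustrative usage with made-up values.
evaluation = Evaluation()
evaluation.set_weight("security", 3)
evaluation.lock()
evaluation.add_score("engineering", "Tool A", "security", 5)
record = commit("Tool A", "Tool B", "Tool B fits the existing SSO setup")
```

Raising an error rather than silently accepting the change is a deliberate choice in this sketch: the point of both rules is that the exception path leaves a trace.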

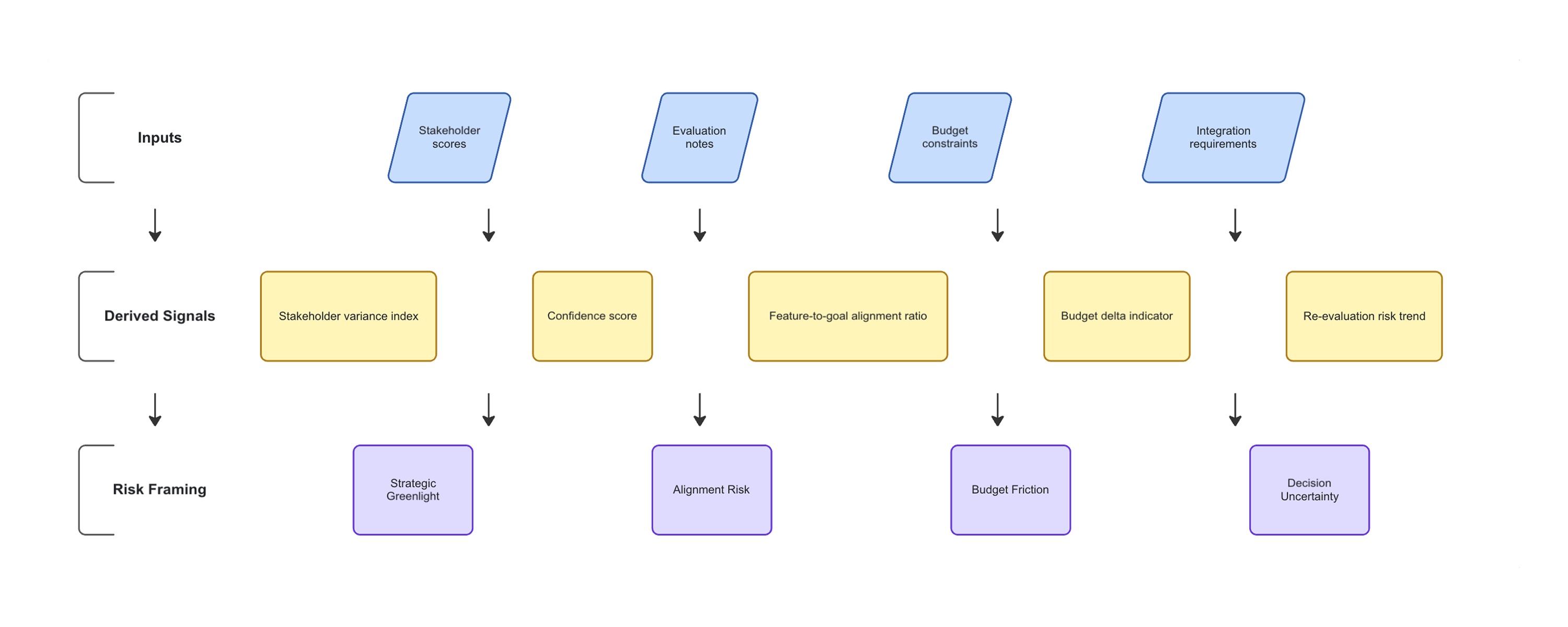

No 8 Metrics & Signals

Structured evaluation creates clarity, but clarity alone does not surface risk.

Stackwise introduces decision signals derived from evaluation behavior, scoring patterns, and contextual constraints. The goal is not to automate judgment, but to make invisible trade-offs visible before a final call is made.

What would be measured and why

• Stakeholder variance:

Score dispersion across roles reveals hidden disagreement early.

• Confidence index:

Combines scoring consistency, note depth, and evaluation duration.

• Feature-to-goal alignment:

Maps tool capabilities against stated team objectives.

• Budget delta:

Highlights financial friction before procurement escalation.

• Re-evaluation frequency:

Tracks how often decisions are revisited, a proxy for institutional memory failure.
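Two of these signals are straightforward to approximate. The sketch below treats stakeholder variance as the population standard deviation of per-role scores and budget delta as quoted cost minus budget. Both definitions, the function names, the outlier threshold, and the sample scores are assumptions for illustration. The confidence index is not attempted, since its weighting is not specified here.

```python
from statistics import mean, pstdev


def stakeholder_variance(scores_by_role):
    """Dispersion of one tool's scores across roles (population std. deviation)."""
    return pstdev(list(scores_by_role.values()))


def outlier_roles(scores_by_role, threshold=1.0):
    """Roles whose score sits more than `threshold` points from the mean."""
    average = mean(scores_by_role.values())
    return sorted(
        role
        for role, score in scores_by_role.items()
        if abs(score - average) > threshold
    )


def budget_delta(quoted_annual_cost, annual_budget):
    """Positive values mean the quote exceeds the stated budget."""
    return quoted_annual_cost - annual_budget


# Illustrative scores on an assumed 1-5 scale.
scores = {"product": 4.0, "design": 4.0, "engineering": 2.0, "finance": 4.0}

dispersion = stakeholder_variance(scores)
outliers = outlier_roles(scores)
delta = budget_delta(quoted_annual_cost=30_000, annual_budget=24_000)
```

In this made-up example, engineering is the only role flagged, which would prompt a conversation about what engineering saw that the other roles did not.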

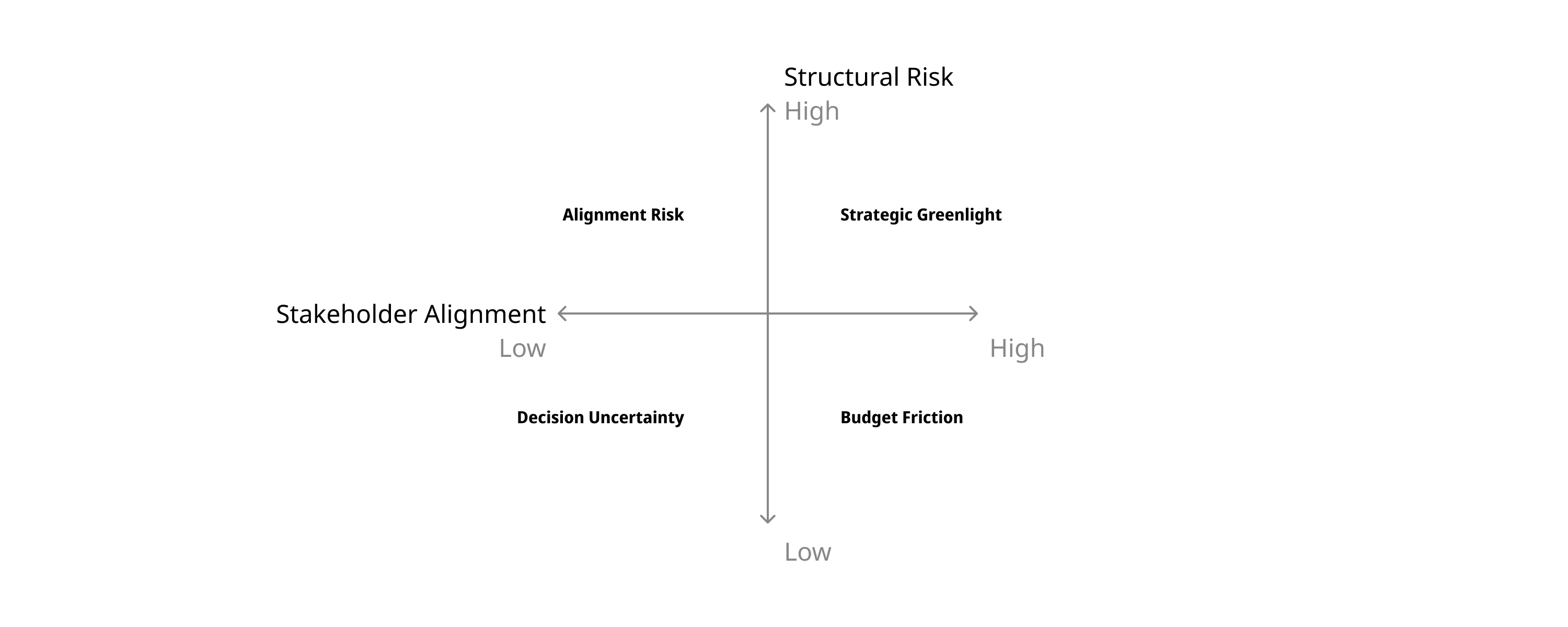

Risk profiles

Instead of a binary "Proceed / Don't Proceed," Stackwise frames decisions through contextual risk signals.

• Strategic Greenlight:

High alignment, low variance, documented rationale.

• Alignment Risk:

Significant scoring gaps between product, design, and engineering.

• Budget Friction:

Strong feature fit but financial tension relative to constraints.

• Decision Uncertainty:

Low note depth, short evaluation cycles, weak confidence signals.

The intent is not to replace human judgment, but to surface structural blind spots before they become organizational regret.
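One way to picture the mapping from signals to risk profiles is a small rule-based function. Everything in the sketch below is hypothetical: the `Signals` fields, the threshold defaults, and the rule order are placeholders chosen for the example, not values from Stackwise.

```python
from dataclasses import dataclass


@dataclass
class Signals:
    """Hypothetical bundle of decision signals for one tool."""
    alignment: float      # feature-to-goal alignment, assumed 0..1
    variance: float       # score dispersion across roles
    budget_delta: float   # quoted cost minus budget
    confidence: float     # confidence index, assumed 0..1
    has_rationale: bool   # whether a written rationale exists


def risk_profiles(s, max_variance=1.0, min_alignment=0.7, min_confidence=0.5):
    """Return every risk profile that applies; thresholds are placeholders."""
    flags = []
    if s.variance > max_variance:
        flags.append("Alignment Risk")
    if s.alignment >= min_alignment and s.budget_delta > 0:
        flags.append("Budget Friction")
    if s.confidence < min_confidence:
        flags.append("Decision Uncertainty")
    # Greenlight only when no risk applies, alignment is high, and rationale exists.
    if not flags and s.alignment >= min_alignment and s.has_rationale:
        flags.append("Strategic Greenlight")
    return flags


# Illustrative inputs.
clean = risk_profiles(Signals(0.9, 0.4, 0.0, 0.8, True))
contested = risk_profiles(Signals(0.9, 1.6, 5000.0, 0.8, True))
```

Returning a list rather than a single label keeps the output in line with the non-binary framing: a tool can carry Alignment Risk and Budget Friction at the same time, and an empty list simply means no profile matched.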

No 9 Prototype

The prototype explores how structured evaluation evolves into assisted decision-making, without removing human judgment from the loop.

AI is not positioned as a replacement for expertise, but as a reflective layer that surfaces patterns teams may overlook under time pressure.

Figma prototype scope

• Tool intake with contextual constraints

• Structured evaluation workspace

• AI-derived confidence and variance signals

• Risk-framed decision summary

• Exportable executive-ready rationale

How it would be tested

• Scenario-based walkthroughs with product and design leads

• Compare assisted vs non-assisted evaluation sessions

• Measure perceived clarity and alignment confidence

• Track decision recall accuracy after 30 to 60 days

The goal of testing is not visual validation, but behavioral validation: Does structured intelligence reduce decision fatigue and re-litigation?

Try the live tool

The concepts above explore an early product direction, a dark-mode mobile interface with AI-assisted scoring. The live tool reflects a deliberate shift: a lighter, more accessible web experience optimized for team use, with the same core evaluation logic intact. Building it live was the next step in validating the concept beyond static screens.

No 10 Outcomes

Stackwise is not just about choosing tools better. It's about increasing an organization's decision maturity over time.

Value Model

• Reduced re-evaluation cycles

• Faster stakeholder alignment

• Clear rationale for finance & leadership

• Lower cognitive load for product & design leads

• Long-term institutional memory

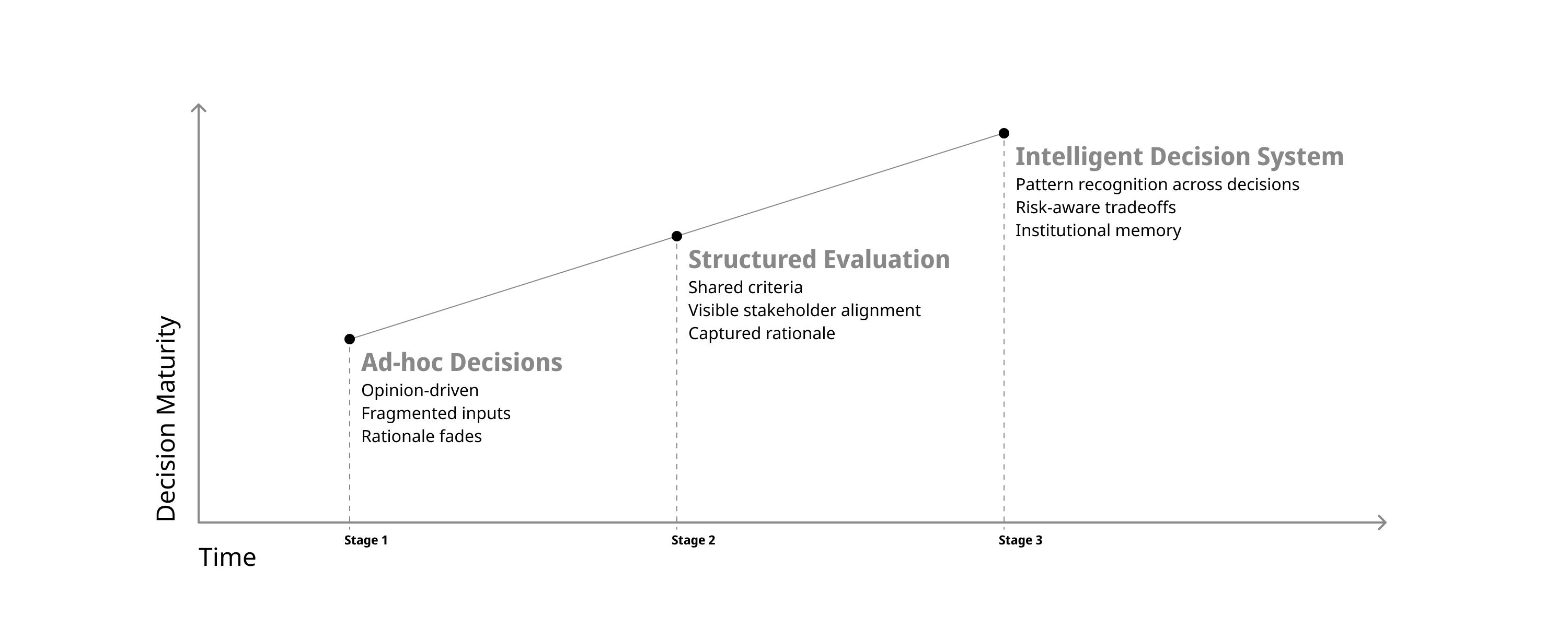

How Workflow Evolves

Instead of reacting to tool pressure every few months, teams build a repeatable decision muscle. Over time, evaluation becomes less about urgency and more about informed trade-offs.

The shift is subtle but powerful: decisions move from opinion-driven and fragmented to structured, transparent, and increasingly intelligent.

No 11 Archive

Stackwise evolved through structured research, systems thinking, and iterative refinement, using AI tools as collaborative partners in the design process.

Concept & Research

• Claude: persona modeling, decision-framing exploration, structured reasoning, and iterative prompt-based research throughout the concept phase

• Cursor: research synthesis, competitive scan summaries, and document refinement

• Miro AI: experience flows and systems visualization

• Google Stitch & Figma Make: rapid UI concepts and interactive prototypes

Build & Ship

• Claude: product logic consulting, UX decision-making, prompt writing, and step-by-step build guidance throughout development

• Lovable: AI-assisted React app generation, iterative UI building, and one-click deployment

• GitHub: version control and code ownership

For a deeper look at the raw research, working notes, and iterative drafts, explore the full Stackwise working document below: